Azure Landing Zones Anti-Patterns: What Goes Wrong and How to Prevent It - Part 1

Every organisation starts its Azure journey with good intentions: clean diagrams, optimistic timelines, and the belief that “how hard can a landing zone be?” Fast forward a year, and many find themselves wrestling with governance gaps, identity chaos, network rewiring, or six-figure remediation projects. In this two-part edition of the Playbook, we break down the anti-patterns that drag Enterprise-Scale Landing Zones (ESLZs) off the rails - and how to avoid those traps from day one. This first part covers the first five anti-patterns.

What’s Really at Stake Here?

Before we unpack the anti-patterns, it helps to step back and recognise what an ESLZ really represents. It’s far more than a bundle of subscriptions, networks, and policies - it’s the operating model for your entire Azure estate. It defines how every team builds, deploys, secures, and scales in the cloud.

A good ESLZ is like a well-engineered highway system with proper lanes, exits, speed limits, and signage.

A bad one is a collection of random roads built by different contractors with no map.

You can drive on both.

Only one gets you where you’re going without crashing.

The financial consequences of a poorly designed ESLZ are significant. Many organisations end up spending heavily just to repair foundational issues, and that is before factoring in:

Delayed go-lives

Security gaps that turn into real incidents

Developers losing productivity to fighting the platform

Simply put, investing in a solid design upfront is far cheaper than paying for a full rebuild later.

Right then. Let’s talk about the mistakes that cause the most pain.

Anti-Pattern #1: Treating the ESLZ as an IT-Only Design Exercise

We’ve all seen this movie.

The infrastructure team vanishes into a conference room with the Cloud Adoption Framework and reappears three weeks later holding a polished, picture-perfect architecture diagram.

Everything looks flawless:

Management groups? Absolutely spotless.

Networking? As smooth as freshly made filter coffee.

Policies? Honestly, a work of art.

And then reality walks in.

Legal raises data-residency concerns.

Compliance brings up requirements no one had on their radar.

Business teams point out workloads that don’t fit the shiny new model at all.

Finance asks how this design supports cost allocation - and the room suddenly goes silent.

Just like that, the “perfect” architecture starts looking a lot less perfect.

The Perspectives That Went Missing

An ESLZ doesn’t just affect IT - it touches every corner of the organisation. When the design happens in isolation, important viewpoints get missed, and the blind spots show up later. The usual gaps include:

Business priorities:

Which workloads are mission-critical? Where is the organisation expanding? Which regions matter for the next three to five years?

Compliance requirements:

What regulations apply? Are there industry-specific mandates? What’s the data sovereignty position?

Data residency:

Where must data be stored? Which regions are restricted? How do DR and backup policies align?

Financial structure:

How will costs be allocated or charged back? Who owns the budgets? How does this tie into existing processes?

Application patterns:

Microservices? Legacy systems? Multi-region deployments? Different teams operate very differently.

Miss these inputs, and your ESLZ becomes a beautifully engineered platform that nobody can actually use.

The Better Way

Get everyone involved upfront:

Security

Compliance

Legal

Finance

Key business units

Yes, the conversations take longer. Yes, the design becomes more complex. But complexity uncovered early is manageable.

Complexity uncovered later becomes a long, expensive re-architecture.

This is one of those rare cases where “measure twice, cut once” isn’t just good advice - it’s how you save time, money, and a lot of headaches.

Anti-Pattern #2: The "Everything in One Subscription" Model

Some organisations start with a single subscription or a handful of large "shared" subscriptions where multiple teams and applications coexist. It seems simpler initially - fewer subscriptions to manage, less overhead, everything in one place.

Why This Hurts

Subscriptions are Azure's primary isolation boundary. Collapsing everything into one or two subscriptions means:

No workload isolation: A misconfiguration in one team's resources can impact another team's production systems

Governance complexity: Trying to apply different policies to different applications in the same subscription requires complex resource-tagging schemes and conditional policies

Cost chaos: Allocating costs between teams, projects, or business units becomes a monthly spreadsheet nightmare

Access control mess: RBAC becomes overly broad or requires granular per-resource assignments that don't scale

Lifecycle problems: Decommissioning one application without affecting others requires careful resource tracking

Limit exhaustion: Azure subscription limits (networking, compute, storage quotas) get hit faster
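To make the cost point concrete, here is a minimal Python sketch. The line items, subscription names, ownership register and the `team` tag are all hypothetical, and real figures would come from your own cost exports. In a shared subscription, spend can only be split by tags, so anything untagged lands in an unallocated bucket. With one subscription per team, the subscription itself is the allocation key.

```python
from collections import defaultdict

# Hypothetical monthly cost line items: (subscription, tags, cost in whole currency units).
SHARED_MODEL = [
    ("shared-01", {"team": "payments"}, 1200),
    ("shared-01", {"team": "crm"}, 800),
    ("shared-01", {}, 500),  # someone forgot to tag this resource
]

DEDICATED_MODEL = [
    ("payments-prod", {}, 1200),
    ("crm-prod", {}, 800),
    ("crm-dev", {}, 500),  # untagged, but the subscription still identifies the owner
]

# Hypothetical ownership register: which team owns each dedicated subscription.
SUBSCRIPTION_OWNER = {"payments-prod": "payments", "crm-prod": "crm", "crm-dev": "crm"}


def allocate_by_tag(items):
    """Split costs using the 'team' tag; untagged spend cannot be attributed."""
    totals = defaultdict(int)
    for _subscription, tags, cost in items:
        totals[tags.get("team", "UNALLOCATED")] += cost
    return dict(totals)


def allocate_by_subscription(items, owners):
    """Split costs using the subscription each line item belongs to."""
    totals = defaultdict(int)
    for subscription, _tags, cost in items:
        totals[owners[subscription]] += cost
    return dict(totals)


print(allocate_by_tag(SHARED_MODEL))
# {'payments': 1200, 'crm': 800, 'UNALLOCATED': 500}
print(allocate_by_subscription(DEDICATED_MODEL, SUBSCRIPTION_OWNER))
# {'payments': 1200, 'crm': 1300}
```

Tags still matter in a multi-subscription estate. They refine an allocation that is already complete instead of carrying the whole burden on their own.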

The Multi-Subscription Model

In a mature ESLZ, subscriptions aren’t an afterthought - they’re deliberate boundaries. Well-designed environments use subscriptions to cleanly separate responsibilities, risk, and governance. Typically, they align to:

Environment separation: Dev, Test, and Prod each live in their own subscription.

Application boundaries: Major applications or platforms get their own subscription(s) so they can evolve independently.

Compliance zones: Workloads with different regulatory requirements stay isolated by design.

Cost allocation: Subscriptions map neatly to cost centres, departments, or business units.

Blast radius control: A mistake or outage in one subscription doesn’t ripple across the entire organisation.

Yes, this means more subscriptions. For a mid-sized organisation, 20–100 subscriptions is completely normal. That number feels intimidating only until you realise what you get in return:

clean isolation, clear owners, predictable billing, simpler governance, and far fewer surprises.

It’s the difference between organised lanes on a highway and everyone trying to squeeze into the same one.
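Simple arithmetic shows how quickly an estate reaches the 20–100 range. The minimal Python sketch below assumes one subscription per application per environment, plus three platform subscriptions for identity, management and connectivity (following the CAF platform split). The application names and cost centres are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass

# Platform subscriptions following the CAF split into identity, management
# and connectivity (the names themselves are illustrative).
PLATFORM_SUBSCRIPTIONS = ["plat-identity", "plat-management", "plat-connectivity"]
ENVIRONMENTS = ("dev", "test", "prod")


@dataclass(frozen=True)
class Application:
    name: str
    cost_centre: str


def plan_subscriptions(apps: list[Application]) -> dict[str, str]:
    """Return {subscription name: cost centre}, one subscription per app per environment."""
    plan = {name: "platform" for name in PLATFORM_SUBSCRIPTIONS}
    for app in apps:
        for env in ENVIRONMENTS:
            plan[f"{app.name}-{env}"] = app.cost_centre
    return plan


# Hypothetical estate: eight applications owned by three cost centres.
apps = [
    Application("payments", "finance"),
    Application("ledger", "finance"),
    Application("data-platform", "finance"),
    Application("crm", "sales"),
    Application("web-shop", "sales"),
    Application("pricing", "sales"),
    Application("hr-portal", "people"),
    Application("payroll", "people"),
]

plan = plan_subscriptions(apps)
print(len(plan))  # 8 apps x 3 environments + 3 platform subscriptions = 27
print(Counter(plan.values()))  # subscriptions per cost centre
```

Each subscription maps to exactly one cost centre, so billing rolls up without any tag parsing. A real design would typically add compliance-zone or sandbox subscriptions on top, which pushes the count higher.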

Anti-Pattern #3: Treating Identity Design as an Afterthought

This one happens far too often.

Networking gets detailed diagrams. Management groups get whiteboard sessions. Policies get workshops.

Then someone casually brings up identity and access management, and the room responds with:

“We’ll just use Entra ID… we’ll figure it out later.”

That “later” is usually when something breaks.

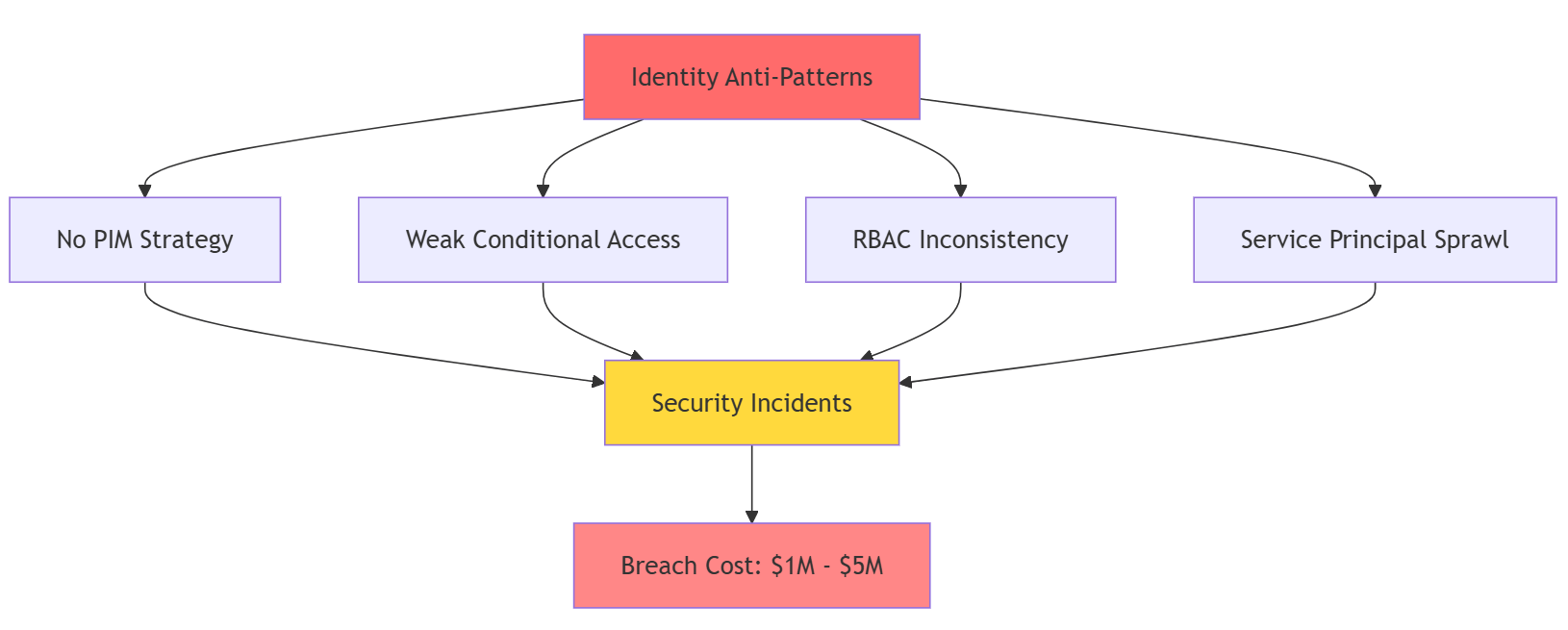

The Security Gap

In the cloud, identity is the new perimeter. If identity isn’t designed intentionally, you’re left with gaps that attackers love and admins hate:

Privilege creep: Permissions pile up over the years with no cleanup.

No break-glass access: When an MFA outage, a federation failure, or a Conditional Access misconfiguration locks administrators out, nobody can manage Azure.

Weak authentication: No Conditional Access, inconsistent MFA, and privileged roles wide open.

No Privileged Identity Management (PIM): Permanent admin rights instead of just-in-time elevation.

Confusing RBAC: Role assignments differ wildly across subscriptions.

Service principal sprawl: Apps with overly broad permissions and secrets that rarely (if ever) rotate.
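Most of these gaps can be checked mechanically. The minimal Python sketch below runs two such checks. The first finds permanent assignments of privileged roles, which points to privilege creep and missing just-in-time elevation. The second finds service principal secrets older than a rotation window. Several details are assumptions:

* The data model.
* The set of roles treated as privileged.
* The 90-day window.
* The `contoso.com` principals and placeholder scopes.

In practice the inventory would come from Entra ID and Azure RBAC exports, which this sketch does not call.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Roles treated as privileged for this check (an assumption; tune to your RBAC model).
PRIVILEGED_ROLES = {"Owner", "User Access Administrator", "Contributor"}
# Assumed rotation policy for service principal secrets.
MAX_SECRET_AGE = timedelta(days=90)


@dataclass(frozen=True)
class RoleAssignment:
    principal: str
    role: str
    scope: str
    permanent: bool  # True = standing access, False = eligible / just-in-time only


@dataclass(frozen=True)
class ServicePrincipalSecret:
    app: str
    created: date


def standing_privileged(assignments):
    """Privileged roles held permanently instead of via just-in-time elevation."""
    return [a for a in assignments if a.permanent and a.role in PRIVILEGED_ROLES]


def stale_secrets(secrets, today):
    """Secrets older than the rotation window."""
    return [s for s in secrets if today - s.created > MAX_SECRET_AGE]


# Hypothetical inventory; real scopes use subscription GUIDs, not these placeholders.
assignments = [
    RoleAssignment("alice@contoso.com", "Owner", "/subscriptions/prod-01", permanent=True),
    RoleAssignment("bob@contoso.com", "Owner", "/subscriptions/prod-01", permanent=False),
    RoleAssignment("ci-deployer", "Reader", "/subscriptions/prod-01", permanent=True),
]
secrets = [
    ServicePrincipalSecret("legacy-etl", date(2023, 1, 10)),
    ServicePrincipalSecret("web-api", date(2024, 5, 20)),
]

today = date(2024, 6, 1)
for a in standing_privileged(assignments):
    print(f"standing {a.role}: {a.principal} on {a.scope}")
for s in stale_secrets(secrets, today):
    print(f"rotate secret for {s.app} (created {s.created})")
```

Run on a schedule, a report like this turns "privilege creep" from a vague worry into a short, reviewable list.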

Identity isn’t something you “work out as you go.”

It’s the control plane for your entire cloud estate - and when it’s weak, everything built on top becomes weaker too.

The Identity Foundation

Before deploying anything - even your first test workload - you need the identity groundwork in place. At a minimum, you need:

Entra ID tenant strategy:

Single tenant or multi-tenant? How will future acquisitions integrate?

RBAC model:

Which roles are used, how they’re assigned, and who approves them.

Privileged Identity Management:

Time-bound elevation and audit trails for all administrative actions.

Conditional Access policies:

Enforced MFA, compliant devices, and location-based rules.

Break-glass accounts:

Locked down, monitored, and ready for emergencies.

Service principal lifecycle:

Creation standards, permission reviews, credential rotation, and clean decommissioning.
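Time-bound elevation is the least intuitive item on that list. The minimal Python model below shows the idea; it is not the Privileged Identity Management API. It demonstrates four rules:

* Eligibility is separate from active access.
* Every activation needs a justification and is capped in length.
* Access expires on its own.
* Each grant is logged.

The 8-hour cap, the principals and the ticket reference are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

MAX_ACTIVATION = timedelta(hours=8)  # assumed policy limit for a single elevation


@dataclass(frozen=True)
class Activation:
    principal: str
    role: str
    start: datetime
    duration: timedelta
    justification: str

    def is_active(self, now: datetime) -> bool:
        # Access exists only inside the window and lapses automatically afterwards.
        return self.start <= now < self.start + self.duration


def activate(eligible, principal, role, start, duration, justification, audit_log):
    """Grant a time-bound role activation if the request satisfies policy."""
    if (principal, role) not in eligible:
        raise PermissionError(f"{principal} is not eligible for {role}")
    if duration > MAX_ACTIVATION:
        raise ValueError(f"requested {duration}, limit is {MAX_ACTIVATION}")
    if not justification.strip():
        raise ValueError("a justification is required")
    activation = Activation(principal, role, start, duration, justification)
    audit_log.append(activation)  # every elevation leaves a record
    return activation


# Hypothetical eligibility list: who *may* elevate, not who holds the role today.
eligible = {("bob@contoso.com", "Owner")}
audit_log = []
start = datetime(2024, 6, 1, 9, 0)
grant = activate(eligible, "bob@contoso.com", "Owner", start,
                 timedelta(hours=2), "INC-1234: restore firewall rule", audit_log)
print(grant.is_active(start + timedelta(hours=1)))  # True
print(grant.is_active(start + timedelta(hours=3)))  # False
```

Nobody holds the role until they ask for it, and the audit log answers "who was admin, when, and why" without extra effort.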

This isn’t optional “best practice.”

It’s the difference between containing a potential incident in minutes… versus discovering a breach months later during a routine audit and scrambling to understand what went wrong.

Anti-Pattern #4: Underestimating Network Complexity

It always starts the same way:

Someone sketches a clean hub-and-spoke diagram - hub subscription, firewall in the middle, spokes neatly peered. Looks simple. Looks elegant. Looks… nothing like real life.

Because once you start deploying at scale, the simplicity evaporates.

Where the Real Complexity Appears

Enterprise networking isn’t just “a hub and a few spokes.” Very quickly, you find yourself dealing with:

Multiple on-prem data centres needing ExpressRoute connectivity

Partner networks requiring secure, segregated access

Centralised internet egress through firewalls

Hundreds (sometimes thousands) of Private Endpoints

VPN for remote access and teams in different regions

Virtual WAN vs traditional hub-and-spoke decisions

Hybrid DNS resolution that actually works

Segmentation for compliance and isolation

DDoS protection and monitoring

Traffic inspection, logging, and route control

Start with ad-hoc peering and “we’ll figure it out later,” and you’ll end up with a patchwork network that’s painful - and expensive - to unwind.
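Ad-hoc peering grows quadratically. The quick Python sketch below compares two designs. The first is the worst case of ad-hoc peering, a full mesh in which every VNet peers directly with every other VNet. The second is hub-and-spoke, in which each spoke peers once with the hub. The VNet counts are arbitrary examples.

```python
def full_mesh_links(vnets: int) -> int:
    """Peering links if every VNet peers directly with every other VNet."""
    return vnets * (vnets - 1) // 2


def hub_and_spoke_links(spokes: int) -> int:
    """Peering links if each spoke peers only with the central hub."""
    return spokes


for total_vnets in (5, 10, 30):
    spokes = total_vnets - 1  # in hub-and-spoke, one of the VNets is the hub
    print(f"{total_vnets} VNets: full mesh = {full_mesh_links(total_vnets)} links, "
          f"hub-and-spoke = {hub_and_spoke_links(spokes)} links")
```

At 30 VNets, a full mesh needs 435 links against 29 for hub-and-spoke, and each link is configured on both sides. VNet peering is also non-transitive. Hub-and-spoke therefore routes spoke-to-spoke traffic through the hub, typically via the firewall and user-defined routes, instead of adding more peerings.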

The Up-Front Design Investment

A robust network architecture takes a bit of time, but it saves enormous effort later. Before deploying workloads, plan for:

Topology choice:

Traditional hub-and-spoke or Virtual WAN?

Tip: Virtual WAN shines for global or multi-region organisations.

IP address planning:

Allocate generous address space. Over-design now; avoid re-IP later.

Connectivity model:

ExpressRoute, VPN, or a hybrid?

What circuits? What redundancy? What failover?

Segmentation:

How are compliance zones isolated?

Which workloads can talk to which?

PaaS connectivity:

A Private Link strategy that won’t explode into chaos.

Firewall and inspection placement:

What traffic is inspected? Where? How does it scale?

DNS architecture:

Hybrid name resolution that supports on-prem + Azure seamlessly.
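IP address planning is the item most often rushed and the hardest to undo. The minimal Python sketch below uses the standard-library `ipaddress` module. It carves a hypothetical /16 reserved for Azure into /22 blocks for a hub and ten spokes. It then checks the plan against existing on-premises ranges. The address ranges and block size are assumptions, so take yours from your own IP address management records.

```python
import ipaddress

# Hypothetical allocations: a /16 reserved for Azure, plus existing on-prem ranges.
AZURE_SUPERNET = ipaddress.ip_network("10.100.0.0/16")
ON_PREM_RANGES = [ipaddress.ip_network("10.0.0.0/16"), ipaddress.ip_network("192.168.0.0/16")]


def carve(supernet, new_prefix, names):
    """Assign consecutive, non-overlapping /new_prefix blocks to each name."""
    blocks = list(supernet.subnets(new_prefix=new_prefix))
    if len(names) > len(blocks):
        raise ValueError(f"{supernet} holds only {len(blocks)} /{new_prefix} blocks")
    return dict(zip(names, blocks))


def conflicts(plan, existing):
    """Planned blocks that overlap an existing range (these would break peering or hybrid routing)."""
    return [(name, net, other) for name, net in plan.items()
            for other in existing if net.overlaps(other)]


names = ["hub"] + [f"spoke-{i:02d}" for i in range(1, 11)]
plan = carve(AZURE_SUPERNET, 22, names)
for name, net in plan.items():
    print(f"{name:10} {net}  ({net.num_addresses} addresses)")
print("conflicts:", conflicts(plan, ON_PREM_RANGES))
print("spare /22 blocks:", len(list(AZURE_SUPERNET.subnets(new_prefix=22))) - len(plan))
```

With every spoke sized at /22, the /16 still has 53 unused blocks for growth. That headroom is the "over-design now" principle expressed in numbers.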

For a complex organisation, this planning takes 4–6 weeks.

That feels long - until you compare it with six months and hundreds of hours spent fixing a fragmented network later.

Good networking isn’t “plumbing.”

It’s architecture. And doing it properly upfront pays for itself many times over.

Anti-Pattern #5: Building Your ESLZ Through ClickOps

It usually starts innocently.

Someone opens the Azure portal, clicks through the wizards, configures networking, sets up policies, tunes diagnostics - and everything looks good. So that becomes the “official” ESLZ.

Six months later, the headaches begin.

The Reproducibility Problem

When the ESLZ is built by hand, the environment becomes a mystery box. Questions pop up like:

Why is this policy configured this way?

What was the original logic behind this network design?

Can we recreate this in another region?

How do we test changes safely?

What changed right before that outage?

And the answer is often the same:

“We don’t know.”

All the decisions lived in someone’s head during ClickOps - and disappeared once the deployment was done.
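Once the intended configuration is written down, several of those questions become mechanical. The minimal Python sketch below shows the core idea behind drift detection. It assumes that both the intended and the live settings have been flattened into simple key-value pairs; the resource names and properties are hypothetical. Anything missing, unmanaged, or changed by hand shows up as a finding. Real tooling such as `terraform plan` or the ARM/Bicep what-if operation compares against actual Azure state, and this sketch shows only the principle.

```python
def drift_report(desired: dict, actual: dict) -> list[str]:
    """Compare intended settings (from code) with observed settings (from the live estate)."""
    findings = []
    for key in sorted(desired.keys() | actual.keys()):
        if key not in actual:
            findings.append(f"MISSING    {key}")
        elif key not in desired:
            findings.append(f"UNMANAGED  {key} = {actual[key]!r}")
        elif desired[key] != actual[key]:
            findings.append(f"DRIFTED    {key}: expected {desired[key]!r}, found {actual[key]!r}")
    return findings


# Hypothetical flattened settings; keys are "resource/property".
desired = {
    "vnet-hub/addressSpace": "10.100.0.0/22",
    "fw-hub/threatIntelMode": "Deny",
    "law-platform/retentionDays": 90,
}
actual = {
    "vnet-hub/addressSpace": "10.100.0.0/22",
    "fw-hub/threatIntelMode": "Alert",   # changed by hand in the portal
    "law-platform/retentionDays": 90,
    "nsg-temp/allowRdpFromAny": True,    # created by hand, never captured in code
}

for line in drift_report(desired, actual):
    print(line)
```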

Why Infrastructure-as-Code Isn't Optional

A modern ESLZ must be deployed and managed as code. Not as portal clicks. Not as tribal knowledge.

That means:

Bicep, Terraform, or ARM templates defining the entire foundation

Version control capturing every change, along with the reasoning behind it

CI/CD pipelines deploying infrastructure consistently across environments

Policy as code managing Azure Policy definitions and assignments

Documentation as code keeping diagrams and architecture notes in sync
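Here is a small example of policy as code, tied to the data-residency concerns from Anti-Pattern #1. The minimal Python sketch below keeps a deny-by-location rule in the repository as data and pairs it with a local check for CI tests. Its structure is a simplified version of the Azure Policy definition format. The display name, parameter name and region list are assumptions. The helper function approximates Azure's evaluation rather than reproducing it.

```python
import json

# Simplified definition modelled on the shape of Azure Policy's built-in
# "Allowed locations" policy. Display name and parameter name are our own.
ALLOWED_LOCATIONS_POLICY = {
    "properties": {
        "displayName": "Allowed locations (data residency)",
        "mode": "Indexed",
        "parameters": {
            "allowedLocations": {
                "type": "Array",
                "metadata": {"displayName": "Allowed locations"},
            }
        },
        "policyRule": {
            "if": {
                "allOf": [
                    {"field": "location", "notIn": "[parameters('allowedLocations')]"},
                    {"field": "location", "notEquals": "global"},
                ]
            },
            "then": {"effect": "deny"},
        },
    }
}


def would_deny(location: str, allowed: list[str]) -> bool:
    """Local approximation of the rule above for CI unit tests; not Azure's policy engine."""
    allowed_lower = {region.lower() for region in allowed}
    # Azure Policy compares these strings case-insensitively, so this check does too.
    return location.lower() not in allowed_lower and location.lower() != "global"


# The serialised definition is what gets committed, reviewed and deployed by the pipeline.
policy_json = json.dumps(ALLOWED_LOCATIONS_POLICY, indent=2)

print(would_deny("eastus", ["uksouth", "ukwest"]))   # True
print(would_deny("uksouth", ["uksouth", "ukwest"]))  # False
```

A change to the allowed regions becomes a reviewed pull request with passing tests, not a portal edit nobody remembers.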

For a mature organisation, this isn't a “nice to have.”

It’s how you run cloud infrastructure safely at scale.

The Real Benefits

IaC pays for itself almost immediately:

You can test platform changes in non-prod

You can recreate the landing zone in a new region or tenant

Peer review catches issues before they blow up production

Rollbacks are possible when changes go sideways

Compliance audits get a complete, timestamped change trail

Setting up the tooling and pipelines might take 2–3 extra weeks, but the long-term payoff is enormous - fewer outages, faster changes, predictable deployments, and no more “Who clicked what?” mysteries.

Quick Check: Are You Hitting These Anti-Patterns?

Did IT design the ESLZ alone without compliance, finance, or business input?

Are most workloads consolidated into 1-3 large shared subscriptions?

Was identity "figured out as we went"?

Is the network "simpler than expected" with minimal planning?

Was the ESLZ built through the portal instead of code?

If you answered "yes" to any of these, it's not too late - but addressing them now costs far less than waiting.

Stay Tuned for Part 2

That wraps up Part 1 of our Azure Landing Zones Anti-Patterns series.

We’ve tackled the foundational missteps - the IT-only design trap, the single-subscription model, weak identity foundations, underestimated network complexity, and the ClickOps-built landing zone. These are the cracks that quietly shape the fate of your ESLZ long before workloads land.

In Part 2, we continue with the next set of anti-patterns - just as common, just as costly, and just as important to avoid.

If you found this useful, tap Subscribe at the bottom of the page to get future updates straight to your inbox.