1. Setting the Scene

1.1 Why Pilots Always Look So Good - And Why That’s the Problem

Imagine you are learning to cook. You invite friends over, you pick a recipe you know inside out, you buy the freshest ingredients from a speciality shop, and you spend a Sunday afternoon preparing everything perfectly. The dinner is a success.

Now imagine you are asked to run a restaurant kitchen on a Tuesday evening with three chefs calling in sick, ingredients delivered late, and fifty different orders coming in at once. Same recipe. Very different outcome.

That is exactly what happens when an AI pilot hits the real world.

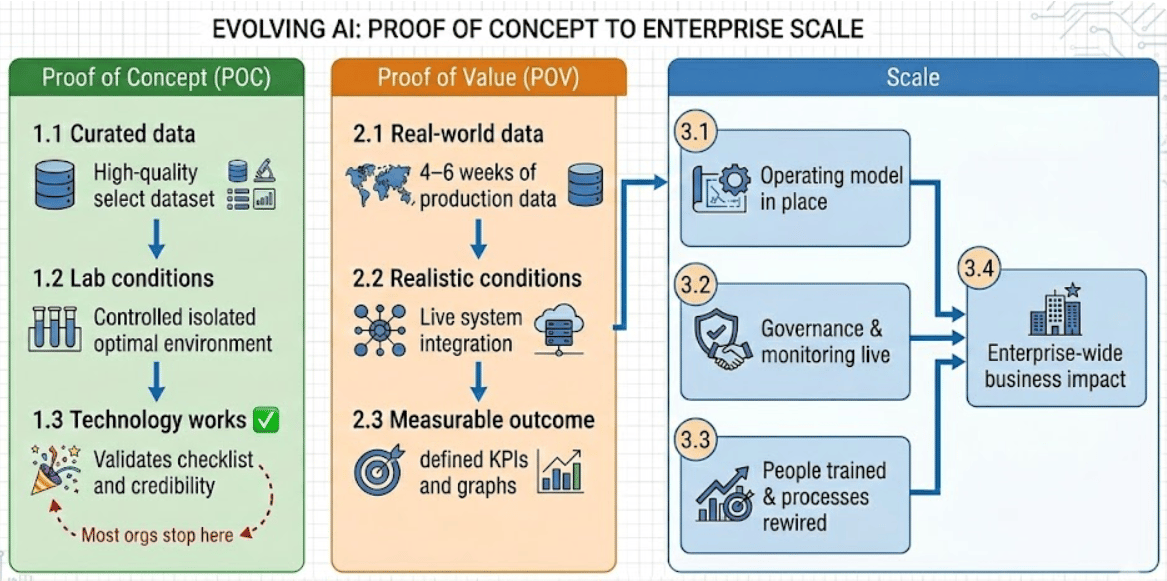

During a proof of concept, the team picks a focused use case, curates a clean dataset, builds the environment carefully, and tests against data they mostly understand. Everything is controlled. Of course it works well - it was designed to work well in those conditions.

Production is not like that. Production has thirty-year-old data nobody fully understands. It has twelve different source systems that were never designed to talk to each other. It has users who do things the model was never trained to handle. And it has business processes that change, regulations that evolve, and timelines that compress.

The jump between these two states is where most projects break. And the gap is bigger than most people realise going in.

1.2 The Numbers That Should Be on Every Boardroom Slide - But Aren’t

There is a reality that rarely appears in the slide deck after a successful AI pilot: most enterprise AI projects do not make it to production.

The problem is usually not the model. In a controlled pilot, many generative AI solutions work well. The real challenge appears when organisations try to scale those pilots without changing how they design, operate, and govern the system.

A proof of concept shows that something can work.

Production requires it to work reliably in real conditions.

Multiple independent studies show the same pattern:

MIT NANDA Initiative

Reviewed 300+ public AI deployments and surveyed 350 practitioners.

About 95% of generative AI pilots did not create measurable impact on profit and loss.

This is happening while enterprises invest an estimated USD 30 to 40 billion each year in generative AI.

S&P Global (2025 Survey)

Surveyed more than 1,000 enterprises across North America and Europe.

42% abandoned most AI initiatives in 2025, up from 17% in 2024.

On average, 46% of AI proofs of concept were stopped before production.

Gartner

Estimates that fewer than half of AI projects move beyond the pilot stage.

RAND Corporation

Estimates overall AI project failure rates above 80%, roughly double traditional non-AI technology projects.

These are not isolated examples. They show a clear gap between experimentation and execution.

Many organisations can prove that the technology works.

Fewer can make it work reliably at scale.

Across Azure deployments in banking, manufacturing, and government, the difference is consistent. Projects that stall treat AI as a small innovation effort. Projects that succeed treat AI as part of the core operating model.

The difference comes down to architecture.

Scaling AI means connecting it properly to identity systems, governance controls, monitoring tools, cost management, and data management processes. These areas are often secondary during a pilot. In production, they become critical.

Before starting the next proof of concept, leaders should ask a simple question:

Are we just proving that the model works, or are we building a system that can run in production?

That answer determines whether the initiative becomes a real capability or another statistic.

2. Foundations you can’t skip

2.1 The Data Problem Nobody Wants to Budget For

In almost every enterprise AI programme, the same issue surfaces: the data is not ready.

According to Informatica’s 2025 CDO Insights survey, the top obstacles to AI success are:

Data quality and readiness - 43%

Lack of technical maturity - 43%

Skills shortage - 35%

Data quality is not a secondary issue. It is the primary execution constraint.

Yet many organisations prioritise model selection and API integration over data engineering. The result is predictable: pilots perform well on curated datasets, but production struggles under real-world variability.

Programmes that succeed invert the ratio. They allocate 50–70% of time and budget to data readiness:

Extraction across fragmented systems

Schema normalisation

Governance and metadata

Quality validation and trust scoring

Retention and compliance controls

They treat data as infrastructure.

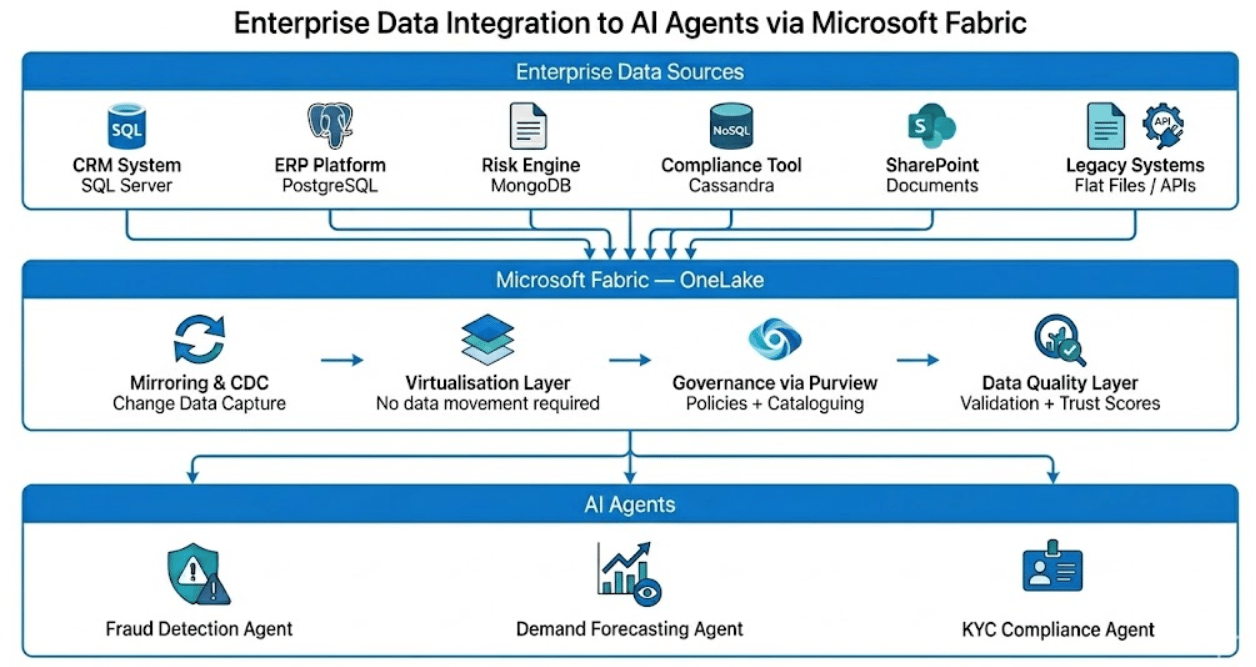

Consider a mid-sized bank. Corporate, retail, investment, and customer service divisions may each run 8–24 systems: loan platforms, CRM tools, risk engines, compliance databases. Data spans SQL Server, PostgreSQL, MongoDB, Cassandra, SharePoint, and legacy flat files. Each system has different schemas, quality profiles, and update cycles.

In a pilot, the model sees a cleaned slice.

In production, it must operate across the full estate, continuously and in near-real time.

The gap is architectural.

This is where Microsoft Fabric and OneLake become operationally relevant.

Instead of heavy batch ETL into a central warehouse that begins aging immediately, OneLake uses mirroring and Change Data Capture to keep data synchronised across sources without unnecessary duplication. Governance via Purview and built-in data quality layers enforce trust and policy before agents consume the data.

For use cases like fraud detection or risk scoring, a three-hour delay is not a minor issue. It invalidates the decision.

At that point, the question is not model accuracy. It is whether the data substrate can sustain decision-grade freshness at scale.
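One way to make "decision-grade freshness" concrete is to give each use case an explicit staleness budget and check it before an agent acts. The sketch below is illustrative only: the use-case names, the budget values, and the `is_decision_grade` helper are assumptions made for this example, not part of Fabric or OneLake.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical freshness budgets per use case; the values are illustrative only.
MAX_STALENESS = {
    "fraud_detection": timedelta(minutes=5),
    "risk_scoring": timedelta(minutes=30),
}


def is_decision_grade(
    use_case: str, last_synced: datetime, now: Optional[datetime] = None
) -> bool:
    """Return True if the data was synchronised within the use case's budget."""
    now = now or datetime.now(timezone.utc)
    return now - last_synced <= MAX_STALENESS[use_case]
```

With budgets like these, data synchronised three hours ago fails the fraud-detection check regardless of how accurate the model is.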

2.2 The Legacy Integration Problem Nobody Budgets For Either

If data quality blocks scaling first, legacy integration is often the second constraint - and it usually arrives unexpectedly.

Most enterprises run IT estates built over decades. Core systems from the 1990s. Heavily customised ERP platforms. Middleware few people fully understand. APIs documented once and never updated. Many of these systems expose only batch interfaces or outdated integration methods, making real-time interaction difficult.

Research indicates that 41% of enterprises cite legacy IT architecture as a direct blocker to AI deployment.

The challenge is not just reading from these systems. Agentic workflows require both read and write capabilities. Writing back in a way that is auditable, reversible, and compliant with data integrity rules designed long before modern APIs existed is where risk escalates.

Integration often relies on heterogeneous formats such as CSV, XML, EDI, or flat files, with no consistent schema standard. An AI agent integrating directly into such an environment operates inside undocumented logic and brittle dependencies.

The pattern that works is architectural separation.

Introduce an abstraction layer between AI agents and legacy systems.

Agents interact with a governed interface that handles translation, validation, and enforcement. They never directly touch the legacy core.

For sensitive write-backs, a human-in-the-loop review before system commit adds an additional control layer.
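The separation described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: `WriteRequest`, `LegacyGateway`, and the `commit` adapter are hypothetical names, and the only validation rule shown (reject empty changes) stands in for whatever integrity rules the real system enforces.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class WriteRequest:
    system: str            # target legacy system (hypothetical identifier)
    record_id: str
    changes: dict
    sensitive: bool = False


@dataclass
class LegacyGateway:
    """Governed interface: agents call submit() and never touch the legacy core."""
    commit: Callable[[WriteRequest], None]   # adapter that performs the real write
    audit_log: list = field(default_factory=list)
    review_queue: list = field(default_factory=list)

    def submit(self, request: WriteRequest) -> str:
        # Validation: placeholder rule, reject writes that change nothing.
        if not request.changes:
            self.audit_log.append(("rejected", request))
            return "rejected"
        # Sensitive writes wait for a human decision before commit.
        if request.sensitive:
            self.review_queue.append(request)
            self.audit_log.append(("pending_review", request))
            return "pending_review"
        self.commit(request)
        self.audit_log.append(("committed", request))
        return "committed"

    def approve(self, request: WriteRequest) -> str:
        # Called by a human reviewer, not by an agent.
        self.review_queue.remove(request)
        self.commit(request)
        self.audit_log.append(("approved", request))
        return "committed"
```

Every path through the gateway leaves an audit entry, which is what makes write-backs traceable after the fact.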

In Microsoft-centric environments, this pattern typically combines:

Azure API Management to standardise access

Azure Event Hubs for controlled event streaming

Microsoft Fabric mirroring for governed data access

Scaling AI safely is not about model intelligence. It is about isolating legacy complexity behind controlled architectural boundaries.

2.3 Cybersecurity and Model Risk: The Barrier Most Teams Underestimate

Data quality receives attention. Cybersecurity and model risk often do not.

Research covering 450+ enterprises found that 51% cite cybersecurity and model risk as a top barrier to scaling AI.

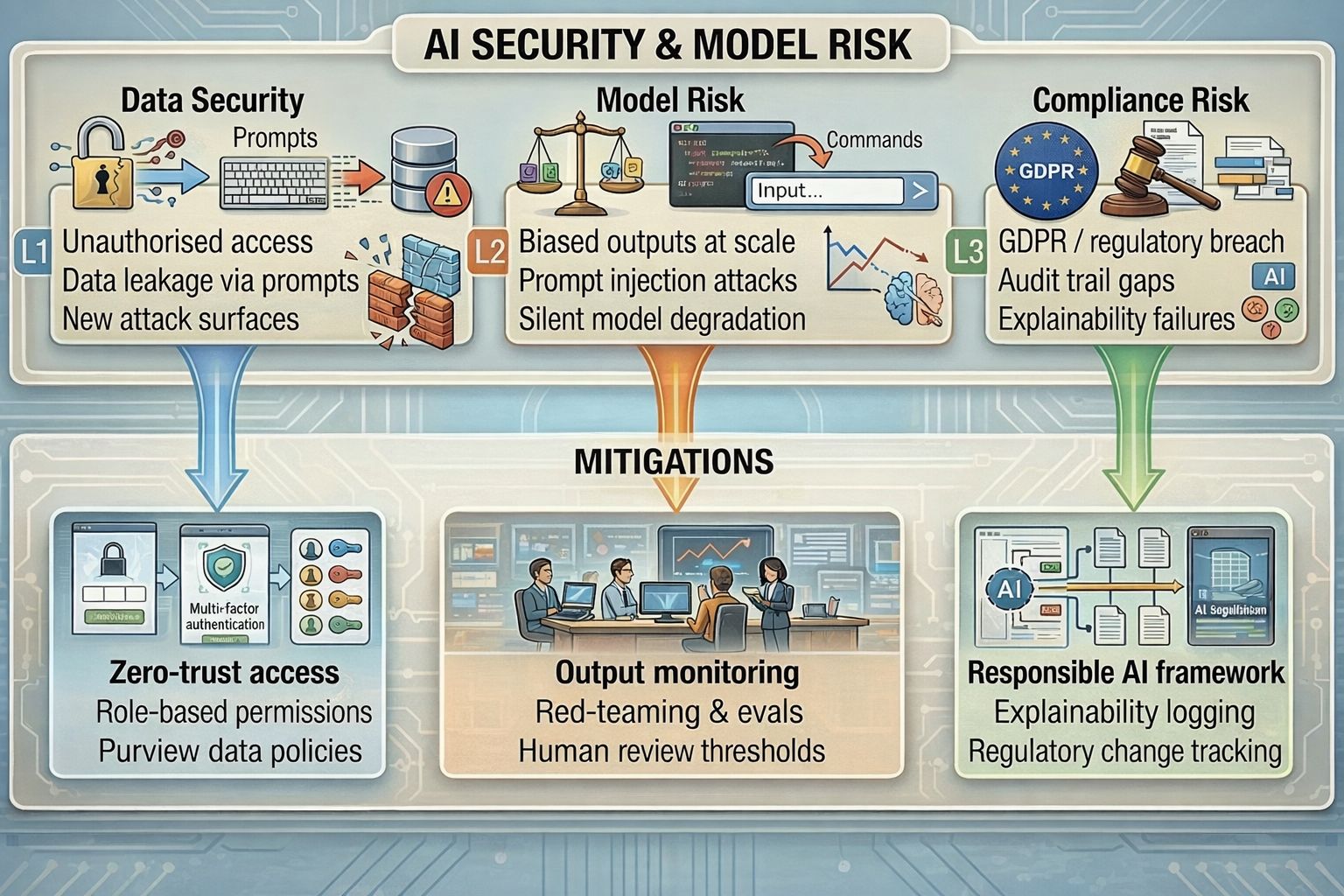

The risks fall into three categories:

Data Security

AI systems expand attack surfaces. Agents connected to HR, finance, or customer data introduce new exposure points, including prompt-based data leakage and unauthorised access.

Model Risk

Outputs may be biased, manipulated through prompt injection, or degrade silently over time. At scale, small inaccuracies compound quickly.

Compliance Risk

High-risk use cases require audit trails, explainability, and regulatory alignment. Gaps in logging or oversight create direct exposure under frameworks such as the European Union Artificial Intelligence Act.

Mitigation must be architectural:

Zero-trust access with role-based permissions and data classification policies

Output monitoring dashboards, red-teaming, and defined human review thresholds

A Responsible AI framework with explainability logging and regulatory change tracking

Tools such as Microsoft Purview support classification, lineage, and policy enforcement. Observability tooling enables monitoring and evaluation pipelines. But defining escalation thresholds and oversight models is an organisational decision.

Scaling AI without structured risk controls does not accelerate value. It multiplies exposure.

2.4 The Agentic AI Problem Nobody Is Talking About Loudly Enough

The conversation around enterprise AI has moved fast. Eighteen months ago, most deployments were what you might call single-agent systems: tools that could search documents, generate summaries, draft emails. Useful, but limited. Today, the serious deployments involve multiple agents working together - one retrieving data, another analysing it, a third triggering an action in a downstream system.

This is what "agentic AI" means in practice. Not one chatbot. A network of specialised agents, working autonomously and in coordination, to handle complex multi-step tasks.

Here is the reliability problem that does not get enough airtime.

Imagine a chain of three agents. If each agent is 90% reliable on its own - which is actually quite good - what is the reliability of the whole chain?

Not 90%. It is 0.9 × 0.9 × 0.9 = 0.729, or 72.9%.

Add a fourth agent at the same reliability, and you are at 65.6%. The compounding effect is significant, and in regulated industries where an incorrect output in a compliance workflow or a risk assessment could carry real consequences, that gap matters enormously.
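The arithmetic is easy to reproduce. The sketch below assumes the agents run strictly in sequence and fail independently of each other, which is a simplification; the function names are made up for this example.

```python
import math


def chain_reliability(per_agent: list) -> float:
    """Reliability of a serial chain, assuming independent failures."""
    return math.prod(per_agent)


def required_per_agent(target: float, n_agents: int) -> float:
    """Per-agent reliability needed for the whole chain to hit a target."""
    return target ** (1 / n_agents)


print(round(chain_reliability([0.9] * 3), 4))   # 0.729
print(round(chain_reliability([0.9] * 4), 4))   # 0.6561
print(round(required_per_agent(0.9, 3), 4))     # 0.9655
```

Read the other way round, a three-agent chain that must be 90% reliable end to end needs each agent to be roughly 96.5% reliable.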

This is not an argument against multi-agent architectures - they are genuinely powerful. It is an argument for investing in the monitoring, reliability engineering, and continuous improvement infrastructure that keeps agent performance improving over time, not just at launch.

This is also why operationalising AI is not a one-time event. Data drifts as the world changes. Models need to be updated when regulations shift or when the business evolves. An agent that is performing well today may degrade quietly next quarter unless someone is watching.

Think about it the way you would think about running infrastructure. When a critical server goes down at 3am, someone gets paged. When a database query starts timing out, there is an alert. Enterprise AI needs the same treatment - observability, alerting, incident response, and continuous improvement cycles. Without it, you are not running AI in production. You are hoping nobody notices when it quietly stops working well.
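As a rough illustration of what "someone is watching" can mean, the sketch below tracks accuracy over a rolling window of reviewed outcomes and signals when it drops below a threshold. The class name, window size, and threshold are assumptions for the example; a real deployment would route the alert into its existing paging and incident tooling.

```python
from collections import deque


class AccuracyMonitor:
    """Rolling-window accuracy check over reviewed agent outcomes."""

    def __init__(self, window: int = 100, threshold: float = 0.9):
        self.outcomes = deque(maxlen=window)
        self.threshold = threshold

    def record(self, correct: bool) -> bool:
        """Record one reviewed outcome; return True if an alert should fire."""
        self.outcomes.append(correct)
        # Only alert once the window is full, to avoid noise at start-up.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        accuracy = sum(self.outcomes) / len(self.outcomes)
        return window_full and accuracy < self.threshold
```

The point is not the specific metric but that degradation triggers an alert instead of waiting for a user complaint.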

3. What Scaling Actually Means

3.1 What “Scaling AI” Actually Means (It Is Not What Most People Think)

When most leaders talk about scaling AI, they usually mean expansion.

More use cases.

More users.

More automation embedded into workflows.

That interpretation is understandable, but incomplete.

Scaling AI is not about increasing the surface area of experimentation. It is about building the infrastructure, governance model, and operating discipline required for AI to function reliably across the enterprise. The difference is structural.

A pilot proves that a model can solve a problem. Scaling requires that the solution operate consistently across business units, data domains, regulatory environments, and workload patterns. This moves AI from innovation to enterprise capability.

The 2025 State of AI survey from McKinsey & Company illustrates the gap clearly. While 88% of organisations report using AI in at least one business function, only 39% report any measurable impact at the enterprise EBIT level. Among those reporting impact, most attribute less than 5% of EBIT to AI.

The signal is clear. Adoption is widespread. Enterprise-level financial impact is not.

Nearly two-thirds of surveyed organisations have not begun scaling AI across the enterprise. They remain in an intermediate state. Pilots exist. Results appear promising in isolated contexts. However, the architectural, operational, and governance model required for enterprise-wide deployment has not been established.

For architects and technical leaders, this distinction matters. Scaling is not horizontal growth. It is systemic integration.

Enterprise scaling requires maturity across three layers. All three must be functional before the phrase “scaled AI” carries operational meaning:

Infrastructure Layer

Compute, networking, identity integration, data pipelines, model hosting environments, observability, and cost management must be production-grade. This includes resilience, security boundaries, and performance baselines.

Data Layer

Harmonised data models, governed access, lineage tracking, quality monitoring, and near-real-time synchronisation with operational systems. AI systems are only as reliable as the substrate they depend on.

Operating Model Layer

Defined ownership, lifecycle management, monitoring of model drift, retraining workflows, risk controls, compliance oversight, and measurable business KPIs tied to AI outputs.

If any one of these layers is immature, scaling stalls.

Scaling AI is therefore not a question of volume. It is a question of organisational readiness. It requires designing AI as an enterprise function, not a feature release.

The organisations that cross the EBIT threshold do not simply deploy more models. They redesign how AI integrates into the operating fabric of the business.

Most organisations focus almost entirely on the AI part itself. They pick models, evaluate APIs, and choose between open-source and proprietary options. This is not wrong, but it is putting the second floor on before the foundations are set.

McKinsey's 2025 AI survey confirms that organisations reporting "significant" financial returns are twice as likely to have redesigned end-to-end workflows before selecting modelling techniques. The technology layer matters. It just matters less than most people assume when the other two layers are not in place.

4. Making the Business Case Stick

4.1 The Proof of Value Question - And Why It Matters More Than the POC

There is an important difference between a proof of concept and a proof of value. Many organisations treat them as the same thing.

A proof of concept asks a simple question: can the technology work?

In most cases today, the answer is yes. The models work. The tools are available. The cloud infrastructure is ready. Showing that the system can generate the right output is usually not difficult.

A proof of value asks a different question: does this create real business benefit in day-to-day operations?

That question is harder.

It requires real data, real users, real timelines, and real constraints. It requires measuring outcomes such as cost savings, faster decisions, reduced errors, or improved revenue.

A proof of concept shows that something is possible.

A proof of value shows that it is worth doing.

A practical approach that works well is simple.

Before rolling out to full production, run the model or agent against one full month of real production data.

Not a cleaned extract.

Not a carefully selected sample.

Actual data from live systems, with all its inconsistencies, missing fields, and edge cases.

Observe where the model struggles.

Identify which data sources create the most errors.

See how it behaves under real volumes and real timelines.

Then fix those issues before scaling further.

This step may add a few weeks to the project timeline, but it significantly increases the chances that production behaves the way the pilot did.

It turns optimism into evidence.
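A simple way to find which data sources create the most errors during such a replay is to tally failures per source system. The sketch below assumes each replayed record has already been reduced to a `(source_system, succeeded)` pair; the function name and the sample source names are hypothetical.

```python
from collections import Counter


def errors_by_source(results):
    """Rank source systems by error rate, highest first.

    results: iterable of (source_system, succeeded) pairs from a replay
    of real production records.
    """
    totals, failures = Counter(), Counter()
    for source, ok in results:
        totals[source] += 1
        if not ok:
            failures[source] += 1
    rates = {source: failures[source] / totals[source] for source in totals}
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


replay = [
    ("crm", True), ("crm", False),
    ("loans", True), ("loans", True),
    ("flat_files", False),
]
print(errors_by_source(replay))
```

The sources at the top of the ranking are where data remediation effort is likely to pay off first.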

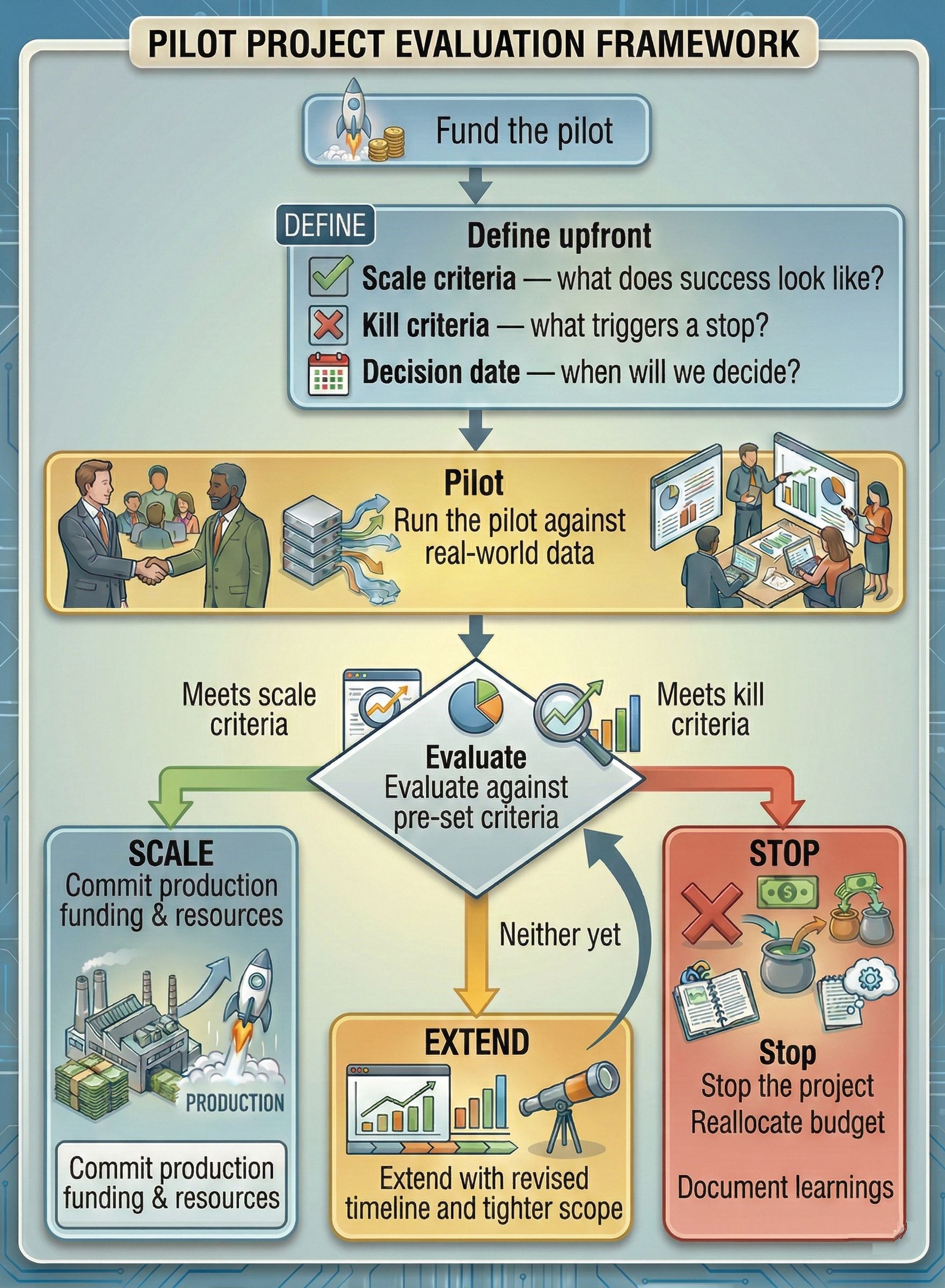

4.2 Funding AI the Right Way: Kill Criteria Matter as Much as Launch Criteria

There is an uncomfortable reality in enterprise AI programmes: organisations are far better at deciding when to start a pilot than when to stop one.

The result is initiatives that technically pass proof of concept but never convert into production value. They are not shut down, but they are not scaled either. They drift.

The difference between organisations that scale and those that accumulate half-finished pilots is capital discipline.

Before funding begins, define:

Scale criteria - what measurable outcome justifies production investment?

Kill criteria - what performance threshold triggers termination?

Decision date - when will the call be made?

Without this pre-commitment, evaluation becomes subjective and inertia takes over.
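Writing the three commitments down as data, before any results exist, is one way to keep the later decision from becoming subjective. The sketch below is an assumption-laden illustration: `PilotGate` and its field names are invented for this example, and the "escalate" outcome for results that fall between the two thresholds is a design choice, not something prescribed here.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PilotGate:
    metric: str
    scale_at_or_above: float   # scale criterion
    kill_below: float          # kill criterion
    decision_date: date        # when the call is made

    def decide(self, observed: float, today: date) -> str:
        if today < self.decision_date:
            return "continue"
        if observed >= self.scale_at_or_above:
            return "scale"
        if observed < self.kill_below:
            return "kill"
        # In-between results go to a named owner rather than drifting.
        return "escalate"
```

Freezing the dataclass is deliberate: the thresholds cannot be quietly adjusted once the pilot is under way.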

More than 80% of enterprises plan to increase AI budgets over the next two years. Budget expansion alone, however, does not produce business value. Without explicit scale and stop conditions tied to measurable outcomes, additional funding often sustains more pilots rather than accelerating production deployment.

The kill-criteria conversation feels pessimistic. In practice, it is a portfolio management control. It keeps capital aligned to production-ready use cases, prevents sunk-cost bias, and ensures that AI investment behaves like disciplined infrastructure strategy rather than experimentation at scale.

4.3 A Quick Word on Cost and Why It Stops Scaling

This comes up consistently in real deployments. Cost management in enterprise AI is genuinely harder than it looks.

A single AI use case running at modest scale might cost USD 10,000 to 50,000 per month in compute and inference costs, depending on model selection, query volume, and data processing requirements. Multiply that by fifty use cases across a large enterprise, and you are looking at a material technology spend that needs to be justified with equally material business outcomes.

The organisations that get this right do not ask "what is the ROI of this specific use case?" for every single agent they deploy. That question, while sensible in isolation, becomes a bureaucratic bottleneck that slows everything down. Instead, they establish a shared AI infrastructure - the data foundation, the governance layer, the model catalogue - and then measure business impact at the portfolio level. The ROI of the shared infrastructure is calculated across the whole set of use cases it enables, not per-use-case.
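The numbers above, and the portfolio-level view, can be worked through in a few lines. This is a sketch: the function names are made up, and the ROI formula used (net benefit divided by total cost) is one common convention, assumed here for illustration.

```python
def portfolio_monthly_cost(use_cases: int, low: float, high: float) -> tuple:
    """Monthly cost range if every use case falls within [low, high]."""
    return use_cases * low, use_cases * high


def portfolio_roi(total_benefit: float, shared_infra_cost: float, use_case_costs: list) -> float:
    """ROI across the whole portfolio: (benefit - cost) / cost."""
    total_cost = shared_infra_cost + sum(use_case_costs)
    return (total_benefit - total_cost) / total_cost


# Fifty use cases at USD 10,000 to 50,000 each per month.
print(portfolio_monthly_cost(50, 10_000, 50_000))   # (500000, 2500000)
```

Fifty use cases at that range come to between USD 500,000 and USD 2.5 million per month, which is why the shared foundation is costed once and its return measured across everything it enables.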

This is actually one of the structural advantages of the Fabric + Foundry architecture. Once the data and governance infrastructure is in place, the marginal cost of adding new use cases drops significantly. The expensive foundation work does not need to be redone for each deployment.

5. The Human Side

5.1 The People Problem Is the Hardest One

Everything discussed so far - data foundations, model governance, agent reliability, observability - is solvable with the right engineering investment. The people challenge is harder to solve with a purchase order.

A Harvard study cited in enterprise AI research puts 90% of transformation failure down to people, process, and culture - with technology accounting for only 10% of the challenge.

Large organisations carry decades of institutional knowledge. They have data that was created by applications nobody uses anymore, for reasons nobody fully remembers. That context - which data means what, why certain fields are populated in unusual ways, what a specific status code actually signifies in practice - lives in people. It does not live in the data dictionary. It does not live in the schema documentation. It lives in the person who has worked with that system for twelve years.

If those people are not actively involved in designing and validating AI systems, the systems will be technically correct and contextually wrong. And a contextually wrong AI in a consequential workflow is worse than no AI at all.

Beyond contextual knowledge, there is the broader adoption challenge.

Research from Boston Consulting Group suggests that AI can help professionals reclaim 26% to 36% of their time in routine, task-heavy work. The productivity upside is meaningful, but only if the tools are actually used.

Deployment without adoption is expensive shelf-ware.

Many organisations introduce AI as an optional layer on top of existing workflows. The underlying process remains unchanged, so employees can continue working the old way. In that model, adoption depends on individual motivation rather than structural design.

Organisations that generate measurable impact take a different approach.

They redesign workflows so AI becomes part of the default operating path.

They adjust approval flows and decision gates to incorporate AI outputs.

They invest in upskilling at scale, not just for data scientists, but for the operational teams consuming AI results daily.

AI value does not come from access to tools. It comes from embedding those tools into how work actually gets done.

6. Running It Like a Business

6.1 What Good Looks Like - The Operating Model

When AI is working well at enterprise scale, it looks less like a technology rollout and more like a business function. There is ownership. There is measurement. There is continuous improvement. There are escalation paths when things go wrong.

Measurement needs to happen at all four levels simultaneously:

Data layer: Is the data quality improving? Are the pipelines keeping up with source system changes? Is new data being catalogued and governed appropriately?

AI layer: Are agent accuracy and reliability trending in the right direction? Where are hallucinations occurring and why? Which agents in the chain are the weakest links?

People layer: Are adoption rates growing? Are business users trusting the outputs? Is time genuinely being reclaimed, or are people still doing the tasks manually as a backup?

Business layer: What is the actual USD impact on process efficiency? What is the cost reduction in the functions where AI is deployed? What does the revenue impact look like in the use cases where it is relevant?

Without measurement at all four levels, it is easy to have impressive AI layer metrics while the business is seeing no tangible benefit - or conversely, to see business improvement without knowing which data quality issues are quietly building up underneath.

7. The Road Ahead

7.1 Pulling It Together: The Path Worth Taking

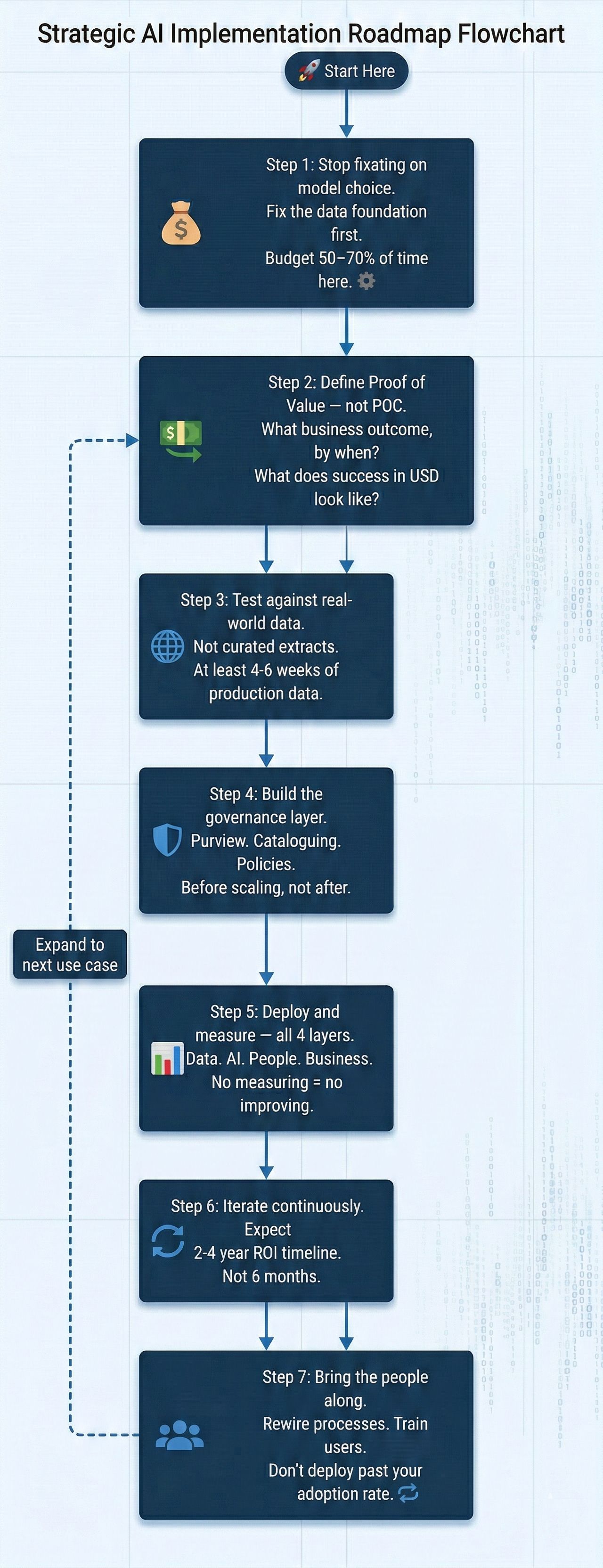

None of this is simple. But the path is clearer than it looks from the outside. Here is the practical sequence:

Final Thoughts

AI is gradually becoming the operating system of the modern enterprise.

That framing is more useful than it might first appear. An operating system is not optional. It is not a feature you can skip. It is the foundation on which everything else runs - and it requires serious investment, ongoing maintenance, and a clear understanding of what it enables.

But operating systems also have to be reliable. They have to handle the unexpected gracefully. They have to be governed properly so that the applications running on top of them behave safely. And they have to be understood by the people who use them daily, not just the engineers who built them.

AI adoption reached 78% of enterprises in 2025, delivering up to 55% productivity gains and $3.70 ROI per dollar invested in the deployments that get it right. The gap between those numbers and the failure statistics cited at the start of this post is not a technology gap. It is an execution gap. It is a discipline gap. It is a data gap.

The organisations closing that gap are not the ones with the most sophisticated models or the largest GPU clusters. They are the ones that built the right foundation, put the right governance in place, brought their people along for the journey - and then had the patience and discipline to measure, iterate, and improve.

That is what moving AI from pilot to production actually looks like. It is not glamorous. It does not make for a great keynote slide. But it is what works.

Acknowledgements & Research Sources

This article draws on insights from a podcast discussion between Srini Kompella (HCLTech) and Jeeva AKR (Microsoft), moderated by Dr. Andy Packham.

Research references include:

Massachusetts Institute of Technology NANDA - State of AI in Business 2025

McKinsey & Company - State of AI 2025

Boston Consulting Group - Build for the Future 2025

S&P Global Market Intelligence - 2025 AI Survey

Gartner - AI Project Research 2024–2025

RAND Corporation - AI Implementation Study 2024

Informatica - CDO Insights 2025
