Model Router in Azure: A Strategic Approach to Multi-Model AI

If you’ve been building AI solutions on Azure lately - especially with Azure AI Foundry (now rebranded to Microsoft Foundry) - you've probably realized something: no single model is perfect for every task.

Some are great at reasoning, some excel at speed, some are lightweight enough for cost-sensitive workloads, and some provide the best grounding and retrieval performance.

A few years ago, choosing an AI model was simple: one model → one endpoint → one bill.

Today? You’re dealing with:

GPT-4.1 for reasoning

Claude Sonnet for analysis

Llama or Phi for lightweight tasks

DeepSeek for efficiency

Domain models that appear every month

Every model has different strengths, pricing, speed, limits, and quirks.

And customers want a single AI experience - not a maze of models stitched together with brittle orchestration logic.

This is exactly where Model Router steps in.

1. What Exactly Is Model Router?

Think of it like this:

Model Router is your smart traffic controller for AI models. Instead of sending every request to one model, it automatically chooses the best model for each task - fast, cheap, or high-quality - so you get the right balance of performance and cost without doing any heavy engineering yourself.

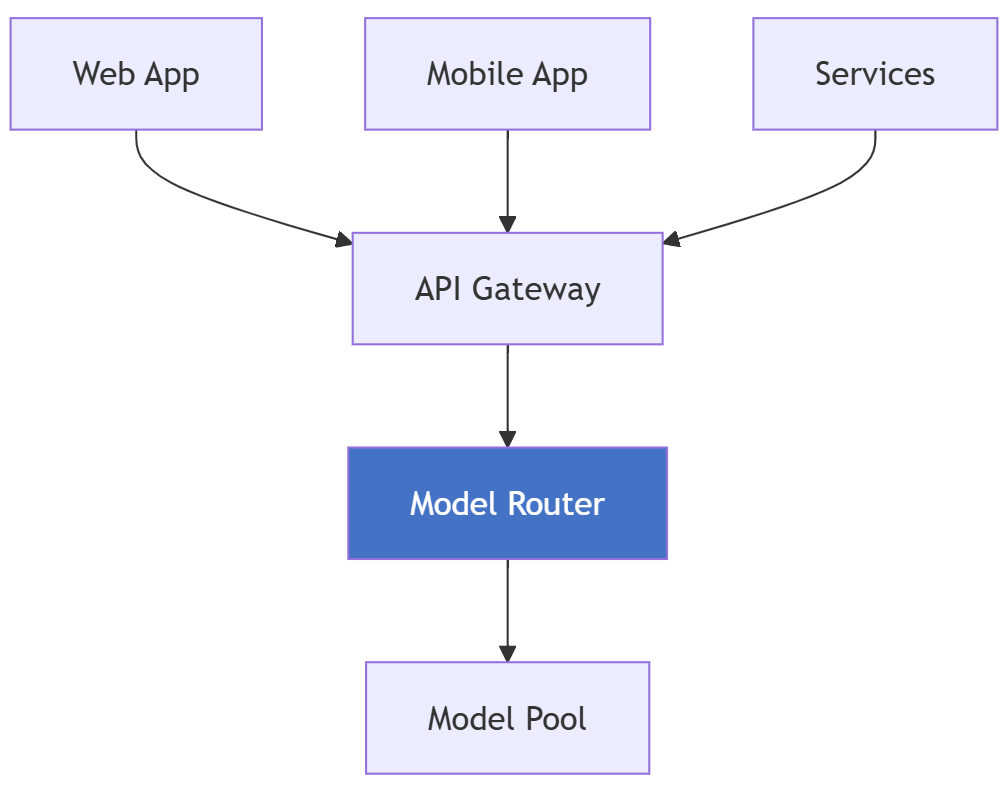

Model Router is a deployable AI chat model trained to automatically choose the best large language model for each incoming prompt, in real time. Think of it as an orchestration layer with built-in brainpower.

When a request comes in, the router analyzes the prompt's complexity, the capabilities it requires, and your routing configuration. Based on that, it selects the most suitable model from its curated pool of underlying models. Your application receives a standard chat completion response, along with metadata that tells you which model was ultimately used.
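Here is a minimal sketch of that round trip. It assumes the router is called like any other Azure OpenAI chat-completions deployment. The endpoint, the deployment name `model-router`, the API version and the sample response are all placeholders; only the shape of the exchange matters. The request body names no model. The `model` field of the response reports which underlying model actually answered.

```python
import json


def build_chat_request(endpoint: str, deployment: str, api_version: str,
                       prompt: str) -> tuple[str, dict]:
    """Build URL and JSON body for an Azure OpenAI-style chat completion call.

    The router deployment is addressed like any other deployment; the body
    does not say which underlying model should answer.
    """
    url = (f"{endpoint.rstrip('/')}/openai/deployments/{deployment}"
           f"/chat/completions?api-version={api_version}")
    body = {"messages": [{"role": "user", "content": prompt}]}
    return url, body


def routed_model(response: dict) -> str:
    """Read the underlying model reported in the response metadata."""
    return response["model"]


# Hypothetical response, trimmed to the fields used here.
sample_response = json.loads("""
{
  "object": "chat.completion",
  "model": "gpt-4.1-nano-2025-04-14",
  "choices": [{"index": 0,
               "message": {"role": "assistant", "content": "Paris."}}]
}
""")

url, body = build_chat_request(
    "https://my-resource.openai.azure.com/",  # placeholder endpoint
    "model-router",                           # hypothetical deployment name
    "2025-01-01-preview",                     # placeholder API version
    "What is the capital of France?",
)
print(url)
print(routed_model(sample_response))  # gpt-4.1-nano-2025-04-14
```

With the official `openai` Python package, the equivalent call is `AzureOpenAI(...).chat.completions.create(model="model-router", messages=...)`, where `model` is the deployment name. The routed model is then available as `response.model`.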

How Model Router Differs from Traditional Approaches

The traditional approach requires developers to handle everything manually:

Writing application logic to decide which model to call

Managing separate endpoints for every model

Implementing custom failover and retry mechanisms

Monitoring each model independently

Model Router simplifies all of this with an intelligent, unified layer:

One single endpoint for all model traffic

Automatic model selection based on the request

Built-in intelligence to balance cost and quality

Centralized monitoring, governance, and control

In short: less plumbing, more intelligence.
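To make the contrast concrete, here is a deliberately simplified sketch of the plumbing the traditional approach implies. It has a hand-written heuristic that picks a model and a failover loop across separate per-model deployments. The deployment names, the keyword heuristic and the injected `call` function are all hypothetical. With Model Router, this logic collapses into a single call to one deployment.

```python
from typing import Callable, Optional, Tuple

# Hypothetical per-model deployments, listed in failover order for each tier.
DEPLOYMENTS_BY_TIER = {
    "simple": ["gpt-4.1-nano", "gpt-4.1-mini"],
    "complex": ["gpt-4.1", "gpt-4.1-mini"],
}

COMPLEX_HINTS = ("why", "explain", "compare")


def pick_tier(prompt: str) -> str:
    """Hand-written heuristic: long prompts or 'reasoning' keywords are complex."""
    text = prompt.lower()
    if len(text.split()) > 50 or any(hint in text for hint in COMPLEX_HINTS):
        return "complex"
    return "simple"


def call_with_failover(prompt: str,
                       call: Callable[[str, str], str]) -> Tuple[str, str]:
    """Try each deployment for the chosen tier in order; return (deployment, answer).

    `call(deployment, prompt)` stands for whatever client code talks to one
    endpoint; a ConnectionError is treated as "this deployment is unavailable".
    """
    last_error: Optional[Exception] = None
    for deployment in DEPLOYMENTS_BY_TIER[pick_tier(prompt)]:
        try:
            return deployment, call(deployment, prompt)
        except ConnectionError as err:
            last_error = err  # remember the failure and try the next deployment
    raise RuntimeError("all deployments for this tier failed") from last_error


# Usage with a stub client in which the full GPT-4.1 deployment is down.
def stub_call(deployment: str, prompt: str) -> str:
    if deployment == "gpt-4.1":
        raise ConnectionError(f"{deployment} unavailable")
    return f"answer from {deployment}"


print(call_with_failover("Explain why the sky is blue", stub_call))
print(call_with_failover("Capital of France?", stub_call))
```

Every piece of this sketch (the heuristic, the tier lists, the failover order, the per-deployment monitoring it would need) is something your team owns and maintains. That ownership is the cost Model Router is meant to remove.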

2. Why Architects Should Care: Real Business Value

Cost Optimization with Measurable Impact

Microsoft's testing showed up to 60% cost savings compared to using GPT-4.1 exclusively, while maintaining similar accuracy. Your actual savings depend on how your traffic splits between simple and complex requests.

Consider an illustrative enterprise workload distribution (prices are per 1M output tokens):

| Query Type | Volume | Traditional Cost | Router Selection | Router Cost | Savings |
| --- | --- | --- | --- | --- | --- |
| Simple Q&A | 60% | $8.00/M | GPT-4.1 Nano | $0.40/M | 95% |
| Moderate | 30% | $8.00/M | GPT-4.1 Mini | $1.60/M | 80% |
| Complex | 10% | $8.00/M | GPT-4.1 | $8.00/M | 0% |
| Blended | 100% | $8.00/M | Dynamic | ~$1.52/M | ~81% |
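The blended row is a volume-weighted average of the per-tier prices. A short check of that arithmetic, using only the figures from the table, is below. The calculation assumes every query lands in exactly the tier shown. It also ignores any charge for the router itself.

```python
# Figures from the table: (share of traffic, $ per 1M output tokens for the
# model the router is assumed to pick). Illustrative prices, not a quote.
mix = {
    "simple":   (0.60, 0.40),   # GPT-4.1 Nano
    "moderate": (0.30, 1.60),   # GPT-4.1 Mini
    "complex":  (0.10, 8.00),   # GPT-4.1
}
baseline = 8.00  # every request sent to GPT-4.1

# Volume-weighted average price, then savings relative to the baseline.
blended = sum(share * price for share, price in mix.values())
savings = 1 - blended / baseline

print(f"blended: ${blended:.2f}/M, savings: {savings:.0%}")
# blended: $1.52/M, savings: 81%
```

Most of the saving comes from the 60% of traffic that moves to the cheapest model. If your traffic skews toward complex queries, the blended figure moves quickly back toward the baseline.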

Operational Benefits

Single Endpoint: One deployment means one set of content filters, one monitoring dashboard, one RBAC configuration, one quota management interface.

Automatic Failover: If the selected model is unavailable, the router automatically falls back to an alternative model, with no application code changes.

Version Management: When models update, the router transparently switches to new versions. Application code remains unchanged.

Latency Optimization: Reasoning models are used for tasks that require complex reasoning, and faster non-reasoning models handle everything else, which keeps average response times low.

3. How the Model Router Works Internally

Here's what you need to understand about how it really works:

The Router Uses AI, Not Rules

Unlike traditional load balancers, Model Router doesn't use static rules you configure. Instead, it uses a trained AI model that:

Analyzes your incoming prompt

Evaluates query complexity and requirements

Considers your selected routing mode (Quality, Cost, or Balanced)

Selects the most appropriate model from your configured subset

The Three Routing Modes

You control routing behavior through three high-level modes:

| Mode | Behavior |
| --- | --- |
| Quality | Prioritizes best-performing models for accuracy and capability |
| Cost | Prioritizes cheaper models when they're sufficient for the task |
| Balanced (default) | Balances cost and quality based on query complexity |
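The real router's decision comes from a trained model, so it cannot be reproduced in a few lines. Purely as a mental model of how the three modes shift the outcome, here is a toy stand-in. The quality scores, prices, per-mode margins and the `difficulty` input are all invented for illustration. Only the inputs (an assessment of the prompt, a mode, and a model subset) mirror the real configuration surface.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Candidate:
    name: str
    quality: float  # invented capability score in [0, 1]
    price: float    # $ per 1M output tokens (illustrative)


# Hypothetical model subset the router may choose from.
SUBSET = (
    Candidate("gpt-4.1-nano", 0.55, 0.40),
    Candidate("gpt-4.1-mini", 0.75, 1.60),
    Candidate("gpt-4.1", 0.90, 8.00),
)

# Invented headroom each mode demands above the estimated difficulty.
MODE_MARGIN = {"cost": -0.10, "balanced": 0.0, "quality": 0.15}


def toy_route(difficulty: float, mode: str = "balanced",
              subset: Sequence[Candidate] = SUBSET) -> str:
    """Return the cheapest candidate whose quality meets difficulty + margin.

    `difficulty` stands in for the router's own reading of the prompt; the
    real router derives that with a trained model, not a number you pass in.
    """
    target = difficulty + MODE_MARGIN[mode]
    good_enough = [c for c in subset if c.quality >= target]
    if not good_enough:  # nothing clears the bar: use the strongest model
        return max(subset, key=lambda c: c.quality).name
    return min(good_enough, key=lambda c: c.price).name


print({mode: toy_route(0.62, mode) for mode in MODE_MARGIN})
# {'cost': 'gpt-4.1-nano', 'balanced': 'gpt-4.1-mini', 'quality': 'gpt-4.1'}
print(toy_route(0.62, "quality", subset=SUBSET[:2]))
# gpt-4.1-mini
```

The last call shows why subset selection matters. When the strongest model is excluded, even Quality mode can only pick from what remains.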

What You Can Configure

Important limitation: You cannot write custom routing rules or set token thresholds. Your configuration options are:

Routing Mode: Choose Quality, Cost, or Balanced

Model Subset: Select which models the router can choose from

Fallback Behavior: Automatic (built-in)

What you CANNOT do:

Set token-based thresholds ("use Model X for <200 tokens")

Define complexity-based rules manually

Configure cost tiers or custom scoring

Create conditional routing logic

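Routing Lifecycle: a request reaches the single router endpoint. The router assesses the prompt against your routing mode and model subset. It then forwards the request to the selected model, falling back automatically if that model is unavailable. Finally, your application receives a standard chat completion whose metadata names the model that answered.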

Simple, clean, AI-driven, and operationally elegant - Model Router is the easiest way to use the right model at the right time, automatically.

4. Architecture Patterns

Pattern 1: Single Unified Endpoint

Benefits: Single integration point, centralized auth, unified monitoring.

Pattern 2: Cost-Tiered Routing by Customer Segment

Different SLAs for different customer tiers without managing multiple codebases.
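A minimal sketch of this pattern is below. It assumes the routing mode is chosen per router deployment, so each customer tier maps to its own deployment of the router. The tier names and deployment names are hypothetical.

```python
from enum import Enum


class Tier(Enum):
    FREE = "free"
    STANDARD = "standard"
    PREMIUM = "premium"


# Hypothetical deployment names: one router deployment per routing mode.
ROUTER_DEPLOYMENT_BY_TIER = {
    Tier.FREE: "router-cost",          # deployed with Cost mode
    Tier.STANDARD: "router-balanced",  # deployed with Balanced mode
    Tier.PREMIUM: "router-quality",    # deployed with Quality mode
}


def deployment_for(tier_name: str) -> str:
    """Resolve a customer's tier (e.g. from their account record) to a deployment.

    Request shape, response parsing and monitoring are shared across tiers;
    only the deployment name varies. Unknown tiers raise ValueError.
    """
    return ROUTER_DEPLOYMENT_BY_TIER[Tier(tier_name)]


print(deployment_for("premium"))  # router-quality
```

The codebase stays single because the tier only changes a string passed to the same client call.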

Pattern 3: Hybrid with Specialized Models

Combine Model Router for general queries with direct deployments for specialized needs requiring fine-tuned or specific models.
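A minimal sketch of the hybrid dispatch is below. It sends specialized tasks to their fine-tuned deployments and sends everything else to the router. The task labels and deployment names are hypothetical.

```python
# Hypothetical deployments: fine-tuned models for specialized tasks, plus one
# Model Router deployment for general traffic.
SPECIALIZED_DEPLOYMENTS = {
    "contract-clause-extraction": "ft-contracts",
    "support-ticket-triage": "ft-triage",
}
ROUTER_DEPLOYMENT = "model-router"


def choose_deployment(task: str) -> str:
    """Known specialized tasks go to their fine-tuned deployment; the rest go to the router."""
    return SPECIALIZED_DEPLOYMENTS.get(task, ROUTER_DEPLOYMENT)


print(choose_deployment("support-ticket-triage"))  # ft-triage
print(choose_deployment("general-question"))       # model-router
```

The sketch dispatches on an explicit task label set by the calling feature rather than guessing from prompt text. That keeps the fine-tuned path deterministic and leaves open-ended traffic to the router's judgment.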

5. Best Practices

When NOT to Use Model Router

1. Single Model Sufficiency: If 95%+ of queries need the same model, the routing overhead isn't justified.

2. Fine-Tuned Model Requirements: The router cannot include custom fine-tuned models. Organizations with significant fine-tuning investments need hybrid architectures.

3. Ultra-Low Latency SLAs: The routing step adds roughly 50-150 ms of overhead. Applications with a p99 latency target under 500 ms might prefer direct deployments.

4. Development Phase: During development, explicitly selecting models aids debugging.
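Rule 1 can be checked with data rather than intuition, because each router response reports which model served it. Below is a minimal sketch, assuming you already log that `model` field per request. The helper names and the log sample are hypothetical, and the 95% threshold mirrors rule 1.

```python
from collections import Counter


def dominant_share(selected_models: list[str]) -> tuple[str, float]:
    """Most frequently selected model and its share of all logged requests."""
    if not selected_models:
        raise ValueError("no routed requests logged")
    model, count = Counter(selected_models).most_common(1)[0]
    return model, count / len(selected_models)


def single_model_sufficient(selected_models: list[str],
                            threshold: float = 0.95) -> bool:
    """One model serving >= threshold of traffic suggests a direct deployment."""
    return dominant_share(selected_models)[1] >= threshold


# Hypothetical log: the `model` field captured from 100 router responses.
log = ["gpt-4.1-mini"] * 97 + ["gpt-4.1"] * 3
print(dominant_share(log))           # ('gpt-4.1-mini', 0.97)
print(single_model_sufficient(log))  # True
```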

6. Key Takeaways for Architects

AI-Driven, Not Rule-Based: Trust the router's AI to make routing decisions

Three Modes: Quality, Cost, Balanced - that's your primary control

Model Subset Selection: Choose wisely which models to include

Automatic Failover: Built-in, no configuration needed

Limitations Matter: No custom rules, no external models, context window limits

Cost Model Changing: Router usage will be charged starting Sept 2025

Final Thoughts

Model Router Is the Foundation of Multi-Model AI

We are entering a world where:

Models evolve monthly

New capabilities appear weekly

Pricing changes rapidly

Enterprises demand reliability + cost control

Architecturally, depending on a single LLM is no longer viable.

Model Router is becoming the load balancer, API gateway, and traffic manager for the AI layer - but with AI-driven intelligence, not static rules.

It won't solve every problem, but for the vast majority of multi-model scenarios, it's the most elegant solution available in Azure today.
